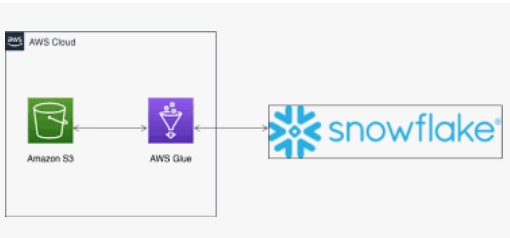

During the last post we discussed how AWS GLUE has masked the PII information in FEED file. After masking, a new file got generated and kept in the S3 bucket. In continuation of the same process, we need this file to be ingested inside the Snowflake. We know there can be multiple way to process the file e.g. Snowpipe, Conventional COPY command etc. However, for our USE case we will connect AWS GLUE with SNOWFLAKE and ingest the file via GLUE aka SPARK code.

Integrating the two solutions enable customers to manage their data ingestion and transformation pipelines with more ease and flexibility. With AWS Glue and Snowflake, customers get the added benefit of Snowflake’s query pushdown which automatically pushes Spark workloads, translated to SQL, into Snowflake

To connect GLU with SNOWFLAKE, following Prerequisites needs to be in place.

- Two Jars file.

- A Bucket in AWS region

- Copy the above JAR files to the S3 bucket.

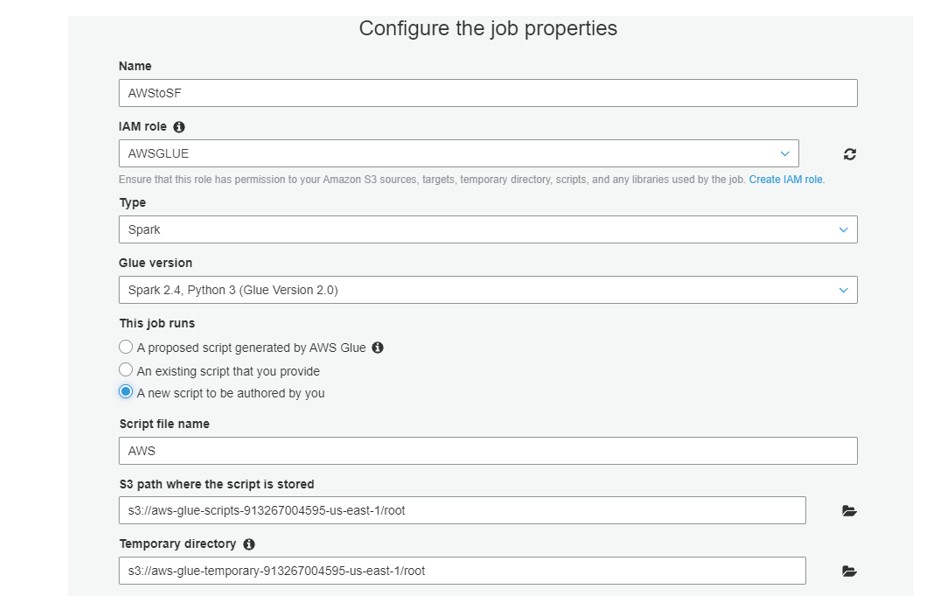

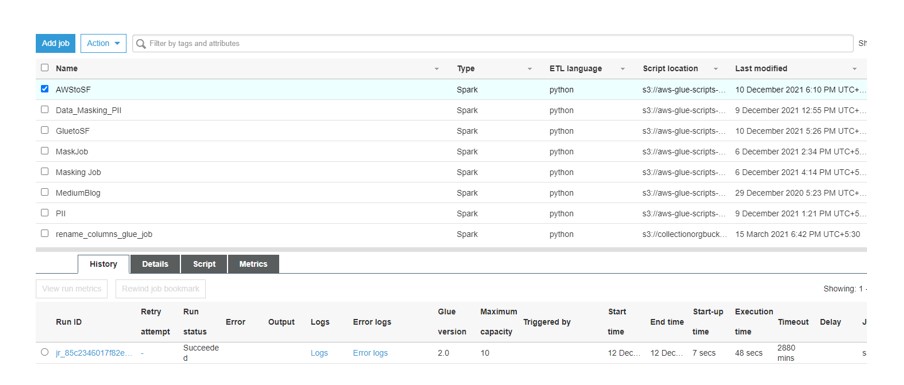

Please see the below steps we have performed in AWS Glue to connect with Snowflake:

- Goto AWS Glue Service and create a new JOB.

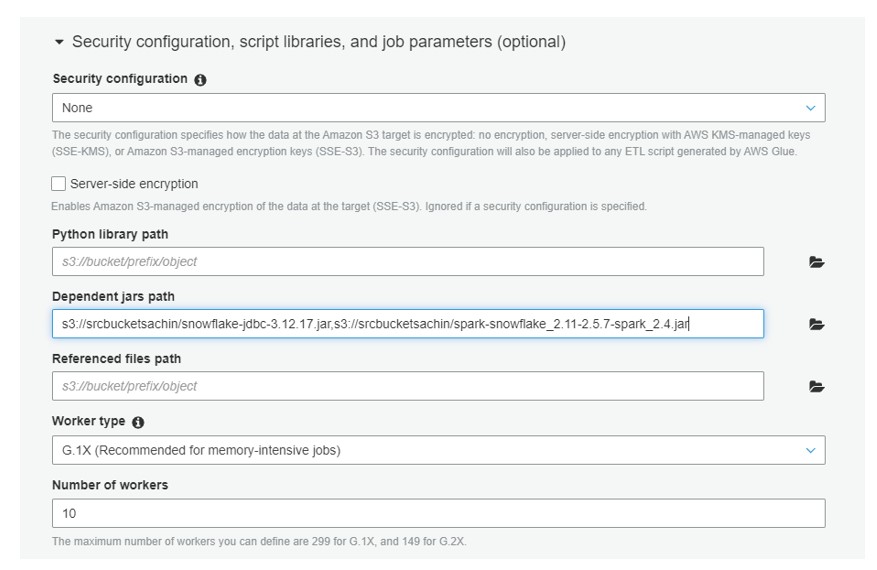

- Expand Script libraries and job parameters:

Under Dependent jars path, add entries for both .jar files

- Click Next again, then click Finish and prompted with a blank script interface.

- Paste the following Script in the Interface.

from pyspark.sql import SparkSession

from pyspark import SparkContext

spark = SparkSession \

.builder \

.appName("GLUEWITHSF") \

.getOrCreate()

def main():

SNOWFLAKE_SOURCE_NAME = "net.snowflake.spark.snowflake"

snowflake_options = {

"sfUrl": "https://cia06334.us-east-1.snowflakecomputing.com/",

"sfUser": "sachinsnowpro",

"sfPassword": "xxxxxxx",

"sfDatabase": "DEMO_DB",

"sfSchema": "PUBLIC",

"sfWarehouse": "COMPUTE_WH",

"sfRole" : "ACCOUNTADMIN"

}

df = spark.read.csv("s3://gluemaskingdestfeed/Invoice_mask.csv")

df.write.format(SNOWFLAKE_SOURCE_NAME) \

.options(**snowflake_options) \

.option("dbtable", "GLUE_TBL_DF").mode("overwrite") \

.save()

main()

- Save and Run the Job.

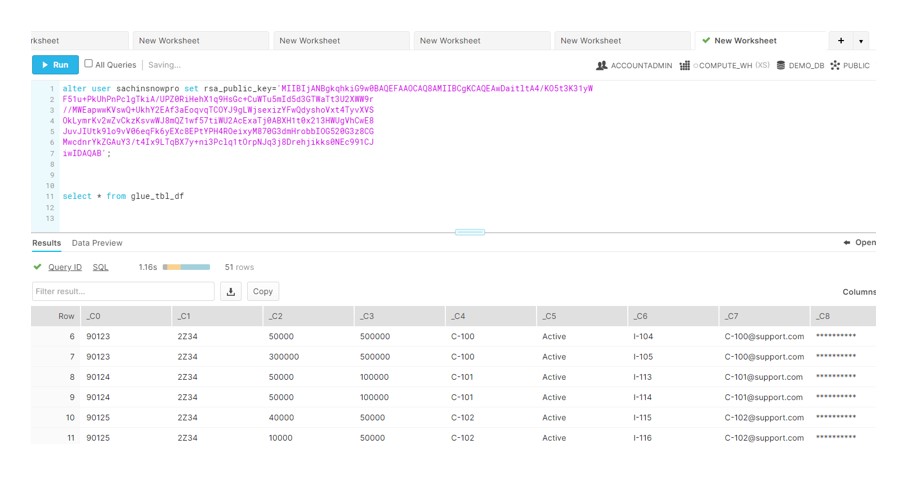

- Login to the Snowflake and you see data gets ingested into the Table: