Today one of my friend discussed requirement where customer was adamant to send only zip file this file needs to be ingested into the snowflake. We implemented this via Zip Python Connector.

As we know snowflake supports only below format.

i.e. CSV,JSON, AVRO,ORC,PARQUET, XML and does not support the other file so in order to ingest this file into snow flake.

I have used Zip Python Connector where we have unzip the file and ingest this into Snowflake without manual intervention.

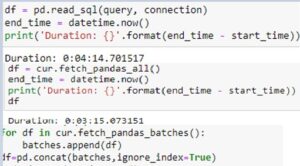

The Zip Python Connector uses to_sql method to insert rows of data , which inserts row by row in dataframe ,

which is fine for smaller DataFrames, but not for huge dataset.

In order to consume the zip file we also need to consider the performance aspect. As it is possibility that zip file is huge and using the to_Sql method will kill the performance.

Function to_sql creates entry line by line instead of Batch upload.

Hence below are the some Performance Improvement suggestion we we can incorporate in our code to avoid any latency issue.

· First should Upload the file to internal location of machine. Please note should be taken care by only code no manual intervention is required in doing so.

· Run the COPY command in Snowflake to load the data, which insert the data in bulk fashion.

Though other programmatic language are also supported but for this use case implemented Python.

import os

import boto3

import pandas as pd

import json

import sqlalchemy

import zipfile

import snowflake.connector

from snowflake.sqlalchemy import URL

from sqlalchemy import create_engine

import io

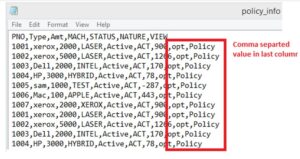

with zipfile.ZipFile("policy_info.zip") as z:

with z.open("policy_info.csv") as f:

train = pd.read_csv(f, header=0,

delimiter=",",names=['POLICY_ID','MODEL','AMT','TYPE','STATUS','DAYS','OPT','POLICY','AGG'])

print (train)

engine = create_engine(URL(

account='fpa95179.us-east-1',

user=xxxxxxx,

password=xxxxx@2020',

warehouse='COMPUTE_WH',

database='DEMO_DB',

schema='PUBLIC',

role='ACCOUNTADMIN'

))

connection = engine.connect()train.to_sql(‘policy_info’, con=engine, if_exists='replace',index=False)

connection.close()

engine.dispose()