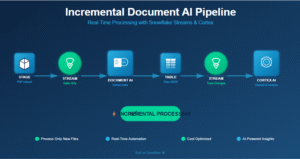

In this discussion, we’ll explore Snowflake’s newest feature: Streams on Consumer Account. As we know data sharing allows organizations to securely share data with other Snowflake accounts without the need to copy the data. Data provider shares specific data securely, and data consumer queries this shared data in real-time, empowering data-driven decision-making. Any changes made by the provider to the shared data are immediately available to the consumer while concealing the specific operations performed on the shared data.

However, imagine a situation where the consumer needs to track DML operations on the shared data by the provider, such as new record insertions, updates, or deletions. Later on, the Consumer has to maintain the audit of all the Delta changes that happen to the shared data for analysis and pattern recognition

For instance, consider a scenario where a customer table shared with a consumer initially contains 100 records. On the following day, the provider adds 10 new records and updates some existing ones. While the consumer can instantly view these changes, it becomes challenging to identify the specifics of the new records or the modifications made to the existing ones

To overcome this issue, Snowflake has introduced “Streams On Shared Views Or Tables”. Creating streams on shared objects in the Consumer account enables monitoring of the data manipulation language (DML) alterations made within those objects.

Note: To establish streams on shared tables or secure views, enabling change tracking on either the shared tables or the underlying tables for a shared view is necessary.

To get more understanding we will see the following technical implementation:

Technical implementation:

Provider Account:

Say we want to share Customer table with Consumer Account:

Steps:

create share shared_customer;

grant usage on database sales to share shared_customer;

grant usage on schema sales.public to share shared_customer;

grant select on table sales.public.customer to share shared_customer;

alter share shared_customer add accounts=RXB36973;

ALTER TABLE customer set CHANGE_TRACKING = TRUE;

Consumer Account:

- Create shared DB from Provider.

create database SHARED_DB from share PTXMHSZ.KUB39045.shared_customer;

use SHARED_DB;

show tables in database;

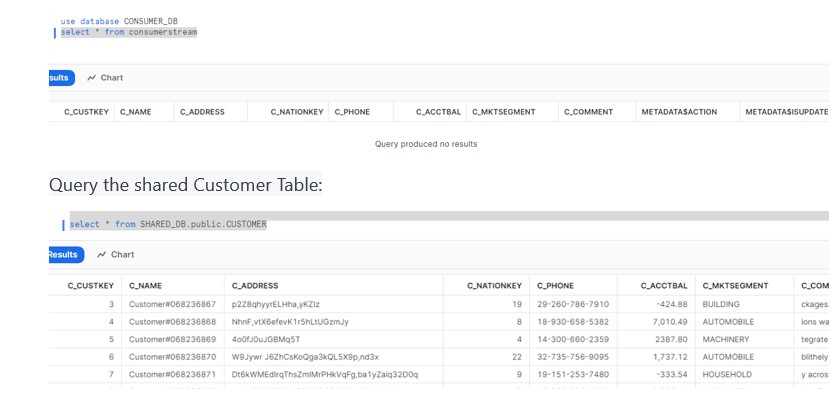

- Create own Database and Stream in consumer account.

create database CONSUMER_DB;

create stream consumerstream on table SHARED_DB.public.CUSTOMER;

select * from consumerstream; //Stream would be empty

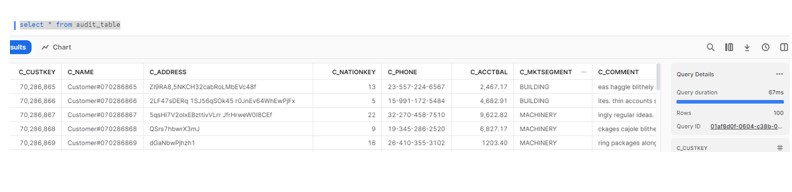

- Create an Audit table to store the records from Streams for later analysis.

create or replace TABLE CONSUMER_DB.PUBLIC.AUDIT_TABLE (

C_CUSTKEY NUMBER(19,0),

C_NAME VARCHAR(25),

C_ADDRESS VARCHAR(40),

C_NATIONKEY NUMBER(38,0),

C_PHONE VARCHAR(15),

C_ACCTBAL NUMBER(12,2),

C_MKTSEGMENT VARCHAR(10),

C_COMMENT VARCHAR(117),

METADATA$ACTION VARCHAR(10),

METADATA$ISUPDATE VARCHAR(10),

METADATA$ROW_ID VARCHAR(100)

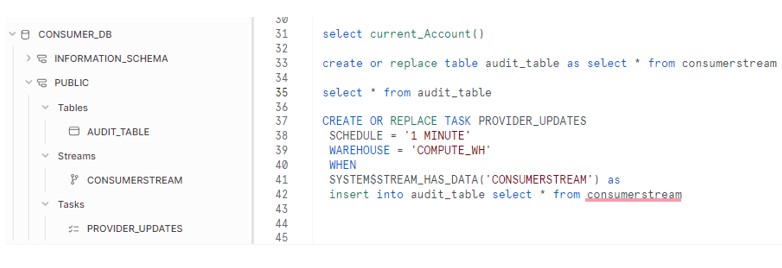

);- Create TASK to insert into AUDIT table from Stream. The TASK will check if STREAM has data, it will spin warehouse and inserts into AUDIT Table.

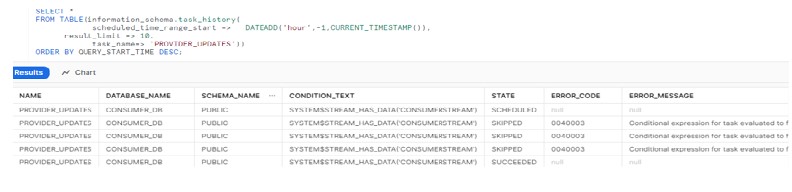

CREATE OR REPLACE TASK PROVIDER_UPDATES

SCHEDULE = '1 MINUTE'

WAREHOUSE = 'COMPUTE_WH'

WHEN

SYSTEM$STREAM_HAS_DATA('CONSUMERSTREAM') as

insert into audit_table select * from consumerstream;

ALTER TASK PROVIDER_UPDATES RESUME;

So we have Streams, TASKS and Table in CONSUMER_DB:

In Provider Account:

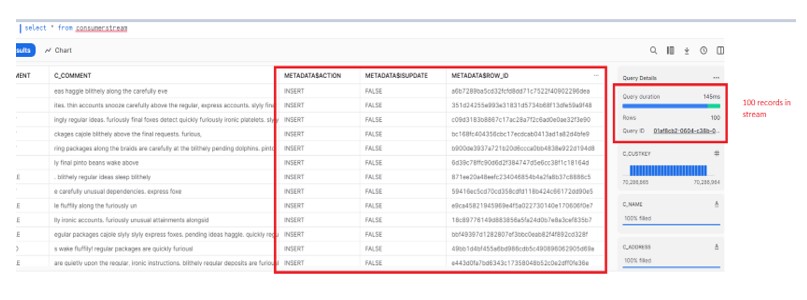

Insert 100 records into Customer table:

insert into customer select * from SNOWFLAKE_SAMPLE_DATA.TPCH_SF1000.CUSTOMER limit 100;

Consumer Account:

Verify the data in Stream:

select * from consumerstream;

Verify the data in Audit table.

Verify the Task History:

In Provider:

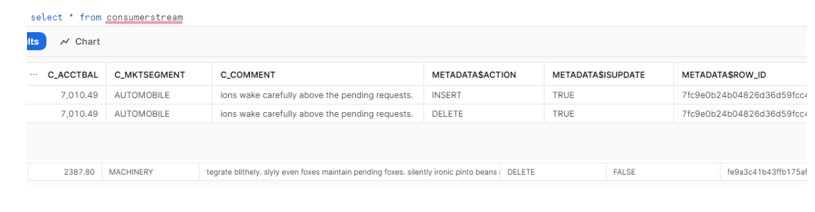

Update the record and observe the behavior in Consumer:

Delete from customer where c_custkey = 5;

update customer set c_nationkey = 12 where c_custkey = 4;

Consumer:

Task gets executed successfully and it has inserted below three records into Audit table lead to the count 103 records.